By integrating data engineering aptitude

This article describes the often-overlooked requirements for building and future-proofing high-value data science capabilities.

- Why is data capability integration important?

- Achieve integration within your organization

- Overlapping priorities and skill sets for success

It’s important for all data scientists to stay on top of technology trends and tools as the industry evolves. The recent boom in artificial intelligence has drawn a lot of attention to emerging technologies such as ChatGPT, a large-scale data product powered by a large language model (LLM), and GitHub Copilot, which helps programmers write code by offering suggestions on the fly. Of course, there are many others.

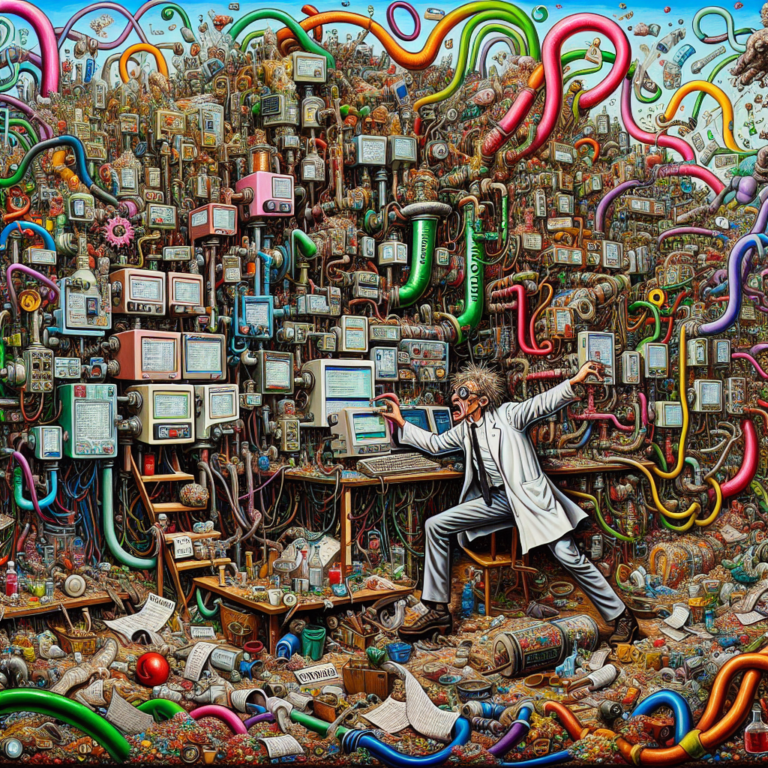

However, the ability of data scientists to take advantage of these new technologies and skills is heavily constrained by the familiar phrase, “garbage in, garbage out.” The idea is that a robust data pipeline is the core of good data science. Although many people accept this, the reality is that data-centric organizations rarely back their data science teams with data engineering support, dedicated or otherwise.

Unfortunately, siloing data science teams from engineering departments creates headaches such as:

- Data scientists must wade through oceans of data across an organization’s infrastructure, but they are often not properly equipped for that engineering work. They end up spending a lot of time hacking together “quick” fixes because they don’t have access to the resources they need.

- Data engineers receive “handoffs” of models or code that come with very few requirements and little of the essential context needed for efficient deployment into production and high-quality ongoing maintenance.

- Good (and sometimes expensive to build) data products never make it into the hands of customers.

Therefore, as a key element of your success map for delivering high-value data science…